It takes around 150 milliseconds (or about one sixth of a second) to blink your eyes. In other words, not long. That’s why you say something happened “in the blink of an eye” when an event passed so quickly that you were barely aware of it. Yet a new study shows that humans can process pictures at speeds that make an eye blink seem like a screening of Titanic. What’s more, these results challenge a popular theory about how the brain creates your conscious experience of what you see.

To start, imagine your eyes and brain as a flight of stairs. I know, I know, but hear me out. Each step represents a stage in visual processing. At the bottom of the stairs you have the parts of the visual system that deal with the spots of darkness and light that make up whatever you’re looking at (let’s say an old family photograph). As you stare at the photograph, information about light and dark starts out at the bottom of the stairs in what neuroscientists call “low-level” visual areas like the retinas in your eyes and a swath of tissue tucked away at the very back of your brain called primary visual cortex, or V1.

Now imagine that the information about the photograph begins to climb our metaphorical neural staircase. Each time the information reaches a new step (a.k.a. visual brain area) it is transformed in ways that discard the details of light and dark and replace them with meaningful information about the picture. At one step, say, an area of your brain detects a face in the photograph. Higher up the flight, other areas might identify the face as your great-aunt Betsy’s, discern that her expression is sad, or note that she is gazing off to her right. By the time we reach the top of the stairs, the image is, in essence, a concept with personal significance. After it first strikes your eyes, it only takes visual information 100-150 milliseconds to climb to the top of the stairs, yet in that time your brain has translated a pattern of light and dark into meaning.

For many years, neuroscientists and psychologists believed that vision was essentially a sprint up this flight of stairs. You see something, you process it as the information moves to higher areas, and somewhere near the top of the stairs you become consciously aware of what you’re seeing. Yet intriguing results from patients with blindsight, along with other studies, seemed to suggest that visual awareness happens somewhere at the bottom of the stairs rather than at the top.

New, compelling demonstrations came from studies using transcranial magnetic stimulation, a method that can temporarily disrupt brain activity at a specific point in time. In one experiment, scientists used this technique to disrupt activity in V1 about 100 milliseconds after subjects looked at an image. At this point (100 milliseconds in), information about the image should already be near the top of the stairs, yet zapping lowly V1 at the bottom of the stairs interfered with the subjects’ ability to consciously perceive the image. From this and other studies, a new theory was born. In order to consciously see an image, visual information from the image that reaches the top of the stairs must return to the bottom and combine with ongoing activity in V1. This magical mixture of nitty-gritty visual details and extracted meaning somehow creates what we experience as visual awareness.

For this model of visual processing to work, you would have to look at the photo of Aunt Betsy for at least 100 milliseconds to be consciously aware of it (since that’s how long it takes for the information to sprint up and down the metaphorical flight of stairs). But what would happen if you saw Aunt Betsy’s photo for less than 100 milliseconds and then immediately saw a picture of your old dog, Sparky? Once Aunt Betsy made it to the top of the stairs, she wouldn’t be able to return to the bottom stairs because Sparky would have taken her place. Unable to return to V1, Aunt Betsy would never make it to your conscious awareness. In theory, you wouldn’t know that you’d seen her at all.

Mary Potter and colleagues at MIT tested this prediction and recently published their results in the journal Attention, Perception, & Psychophysics. They showed subjects brief pictures of complex scenes including people and objects in a style called rapid serial visual presentation (RSVP). You can find an example of an RSVP image stream here, although the images in the demo are more racy and are shown for longer than the pictures in the Potter study.

The RSVP image streams in the Potter study were strings of six photographs shown in quick succession. In some image streams, pictures were each shown for 80 milliseconds (or about half the time it takes to blink). Pictures in other streams were shown for 53, 27, or 13 milliseconds each. To give you a sense of scale, 13 milliseconds is about one tenth of an eye blink, or one hundredth of a second. It is also far less time than Aunt Betsy would need to sprint to the top of the stairs, much less to return to the bottom.
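
If you'd like to check that sense of scale yourself, here is a minimal Python sketch. It uses only numbers quoted in this post (the 150-millisecond blink and the four picture durations). The names `BLINK_MS`, `durations_ms`, and `fraction_of_blink` are my own labels for illustration, not anything from the study.

```python
# Blink duration quoted at the top of this post, in milliseconds
BLINK_MS = 150

# Per-picture durations used in the RSVP streams, in milliseconds
durations_ms = [80, 53, 27, 13]


def fraction_of_blink(duration_ms, blink_ms=BLINK_MS):
    """Return a duration expressed as a fraction of one eye blink."""
    return duration_ms / blink_ms


for d in durations_ms:
    # Show each duration as a share of a blink and in seconds
    print(f"{d:>2} ms = {fraction_of_blink(d):.2f} blinks = {d / 1000:.3f} s")
```

Run as written, it prints each duration as a fraction of a blink and in seconds. The 13-millisecond pictures come out at a bit under one tenth of a blink.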

At such short timescales, people can’t remember and report all of the pictures they see in an image stream. But are they aware of them at all? To test this, the scientists gave their subjects a written description of a target picture from the image stream (say, flowers) either just before the stream began or just after it ended. In either case, once the stream was over, the subject had to indicate whether an image fitting that description appeared in the stream. If it did appear, subjects had to pick which of two pictures fitting the description actually appeared in the stream.

Considering how quickly these pictures are shown, the task should be hard for people to do even when they know what they’re looking for. Why? Because “flowers” could describe an infinite number of photographs with different arrangements, shapes, and colors. Even when the subject is tipped off with the description in advance, he or she must process each photo in the stream well enough to recognize the meaning of the picture and compare it to the description. On top of that, this experiment effectively jams the metaphorical visual staircase full of images, leaving no room for visual info to return to V1 and create a conscious experience.

The situation is even more dire when people get the description of the target only after they’ve viewed the entire image stream. To answer correctly, subjects have to process and remember as many of the pictures from the stream as possible. None of this would be impressive under ordinary circumstances but, again, we’re talking 13 milliseconds here.

Sensitivity (computed from subject performance) on the RSVP image streams with 6 images. From Potter et al., 2013.

How did the subjects do? Surprisingly well. In all cases, they performed better than if they were randomly guessing – even when tested on the pictures shown for 13 milliseconds. In general, they scored higher when the pictures were shown longer. And as any test-taker could tell you, people do better when they know the test questions in advance. This pattern held up even when the scientists repeated the experiment with 12-image streams. As you might imagine, that makes for a very crowded visual staircase.

These results challenge the idea that visual awareness happens when information from the top of the stairs returns to V1. Still, they are by no means the theory’s death knell. It’s possible that the stairs are wider than we thought and that V1 is able (at least to some degree) to represent more than one image at a time. Another possibility is that the subjects in the study answered the questions using a vague sense of familiarity – one that might arise even if they were never overtly conscious of seeing the images. This is a particularly compelling explanation because there’s evidence that people process visual information like color and line orientation without awareness when late activity in V1 is disrupted. The subjects in the Potter study may have used this type of information to guide their responses.

However things ultimately shake out with the theory of visual awareness, I love that these intriguing results didn’t come from a fancy brain scanner or from the coils of a transcranial magnetic stimulation device. With a handful of pictures, a computer screen, and some good old-fashioned thinking, the authors addressed a potentially high-tech question in a low-tech way. It’s a reminder that fancy, expensive techniques aren’t the only way – or even necessarily the best way – to tackle questions about the brain. It also shows that findings don’t need colorful brain pictures or glow-in-the-dark mice in order to be cool. You can see in less than one-tenth of a blink of an eye. How frickin’ cool is that?

Photo credit: Ivan Clow on Flickr, used via Creative Commons license

Potter MC, Wyble B, Hagmann CE, & McCourt ES (2013). Detecting meaning in RSVP at 13 ms per picture. Attention, Perception, & Psychophysics. PMID: 24374558

Humans learn about objects by exploring them. I

Humans learn about objects by exploring them. I

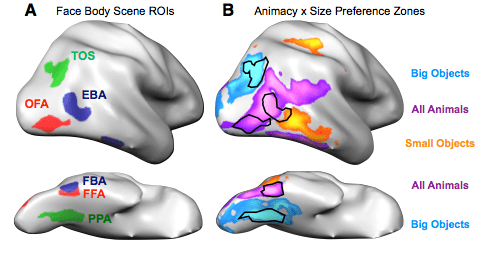

While we have five wonderful senses, humans rely most on our sense of sight. The allocation of real estate in the brain reflects this hegemony; a far greater chunk of your cerebral cortex is dedicated to vision than to any other sense. So when you encounter people, objects, and animals in the world, you typically use visual information to tell your lover from a toothbrush from your cat. And while it would be reasonable to expect your brain to process all of these items in the same way, it does nothing of the sort. Instead, the visual cortex segregates and plays favorites.

While we have five wonderful senses, humans rely most on our sense of sight. The allocation of real estate in the brain reflects this hegemony; a far greater chunk of your cerebral cortex is dedicated to vision than to any other sense. So when you encounter people, objects, and animals in the world, you typically use visual information to tell your lover from a toothbrush from your cat. And while it would be reasonable to expect your brain to process all of these items in the same way, it does nothing of the sort. Instead, the visual cortex segregates and plays favorites.

More than a century ago, scientists discovered something usual about how people with

More than a century ago, scientists discovered something usual about how people with